[Feature photo above by Christiaan Triebert via flickr (CC BY 2.0).]

Have you ever heard of Math Storytelling Day? On September 25, people around the world celebrate mathematics by telling stories together. The stories can be real, like my story below, or fictional, like the tale of Wizard Mathys from Fantasia and his crystal ball communication system.

Check out these posts for more information:

- Happy Math Storytelling Day

- Math Storytelling Day resources

- Moebius Noodles: Math Storytelling Day archive

My Math Story

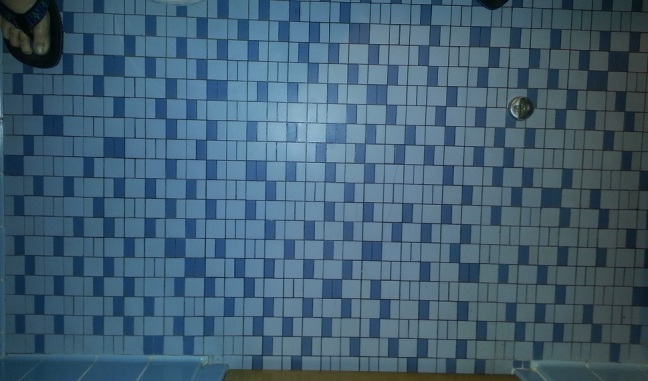

My story begins with an unexpected adventure in pain. Appendicitis sidewhacked my life last week, but that’s not the story. It’s just the setting. During my recovery, I spent a lot of time in the smaller room of my hospital suite. I noticed this semi-random pattern in the floor tile, which made me wonder:

- Did they choose the pattern to keep their customers from getting bored while they were … occupied?

- Is the randomness real? Or can I find a line of symmetry or a set of tiles that repeat?

- If I take pictures from enough different angles, could I transfer the whole floor to graph paper for further study?

- And if the nurse finds me doing this, will she send me to a different ward of the hospital? Do hospitals have psychiatric wards, or is that only in the movies?

- What is the biggest chunk of squares I could “break out” from this pattern that would create the illusion of a regular, repeating tessellation?

I gave up on the graph paper idea (for now) and printed the pictures to play with. By my definition, “broken” pattern chunks need to be contiguous along the sides of the tiles, like pentominoes. Also, the edge of the chunk must be a clean break along the mortar lines. The piece can zigzag all over the place, but it isn’t allowed to come back and touch itself anywhere, even at a corner. No holes allowed.
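For readers who like to tinker with code, here is a minimal Python sketch of those three rules. It is only an illustration under simplifying assumptions: every tile is treated as a full unit square on a grid (the half-square rectangles are ignored), a chunk is a set of hypothetical `(row, col)` positions, and "touching itself at a corner" is read as two squares that meet only at a corner point. The function name `is_valid_chunk` is made up for this example.

```python
from collections import deque

# The four side-sharing neighbor directions (no diagonals).
SIDES = ((1, 0), (-1, 0), (0, 1), (0, -1))


def is_valid_chunk(cells):
    """Return True if the (row, col) squares in `cells` obey all three rules."""
    cells = set(cells)
    if not cells:
        return False

    # Rule 1: contiguous along sides. Walk outward from any square
    # across shared sides and make sure every square gets reached.
    start = next(iter(cells))
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in SIDES:
            nb = (r + dr, c + dc)
            if nb in cells and nb not in seen:
                seen.add(nb)
                queue.append(nb)
    if seen != cells:
        return False

    # Rule 2: no touching at a corner. Two diagonal squares are a
    # problem when neither square that lies between them is in the chunk.
    for r, c in cells:
        for dc in (1, -1):
            if ((r + 1, c + dc) in cells
                    and (r + 1, c) not in cells
                    and (r, c + dc) not in cells):
                return False

    # Rule 3: no holes. Flood-fill the empty squares from a corner of a
    # padded bounding box; any empty square left unreached is a hole.
    r0 = min(r for r, _ in cells) - 1
    r1 = max(r for r, _ in cells) + 1
    c0 = min(c for _, c in cells) - 1
    c1 = max(c for _, c in cells) + 1
    outside = {(r0, c0)}
    queue = deque([(r0, c0)])
    while queue:
        r, c = queue.popleft()
        for dr, dc in SIDES:
            nr, nc = r + dr, c + dc
            nb = (nr, nc)
            if (r0 <= nr <= r1 and c0 <= nc <= c1
                    and nb not in cells and nb not in outside):
                outside.add(nb)
                queue.append(nb)
    box_area = (r1 - r0 + 1) * (c1 - c0 + 1)
    return len(outside) + len(cells) == box_area
```

With this sketch, a zigzag such as `{(0, 0), (0, 1), (1, 1), (1, 2), (2, 2)}` passes, while a 3-by-3 ring with the center missing fails because of the hole. Handling the half-square rectangles would need a finer grid, which is left out here.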

I’m counting the plain squares as the unit and each of the smaller rectangles as a half square. So far, the biggest chunk of repeating tiles I’ve managed to break out is 283 squares.

What Math Stories Will You Tell?

Have you and your children created any mathematical stories this year? I’d love to hear them! Please share your links in the comments section below.

* * *

“Math Storytelling Day: The Hospital Floor” copyright © 2014 by Denise Gaskins.

I found this math story fascinating! I like the blue and white tiles and random patterns.

Hi, Mom! Thanks for dropping by, and I’m glad you liked my story. Not what I had planned on doing last week, but it gave me a way to pass the time…

Sorry to hear about your appendicitis, but I’m glad you managed to keep your maths head on while you were in hospital. I like your tiles story – thank you! (I also entertain myself in strange ways. I might be beside you in the psychiatric ward.)

We could have fun together, Lula. 🙂 I certainly enjoy following your family’s math story on your blog.